What if your AI assistant didn't live in the cloud -- but in your garage? Like the Trunk Monkey in your Suburban's trunk?

Meet Mr. Peepers. He's an AI agent running entirely on local hardware in my home lab. No OpenAI API key. No monthly bill from Azure. Just a Ryzen 9 7950X3D, an RX 7900 XTX with 24GB of VRAM, and a lot of stubbornness.

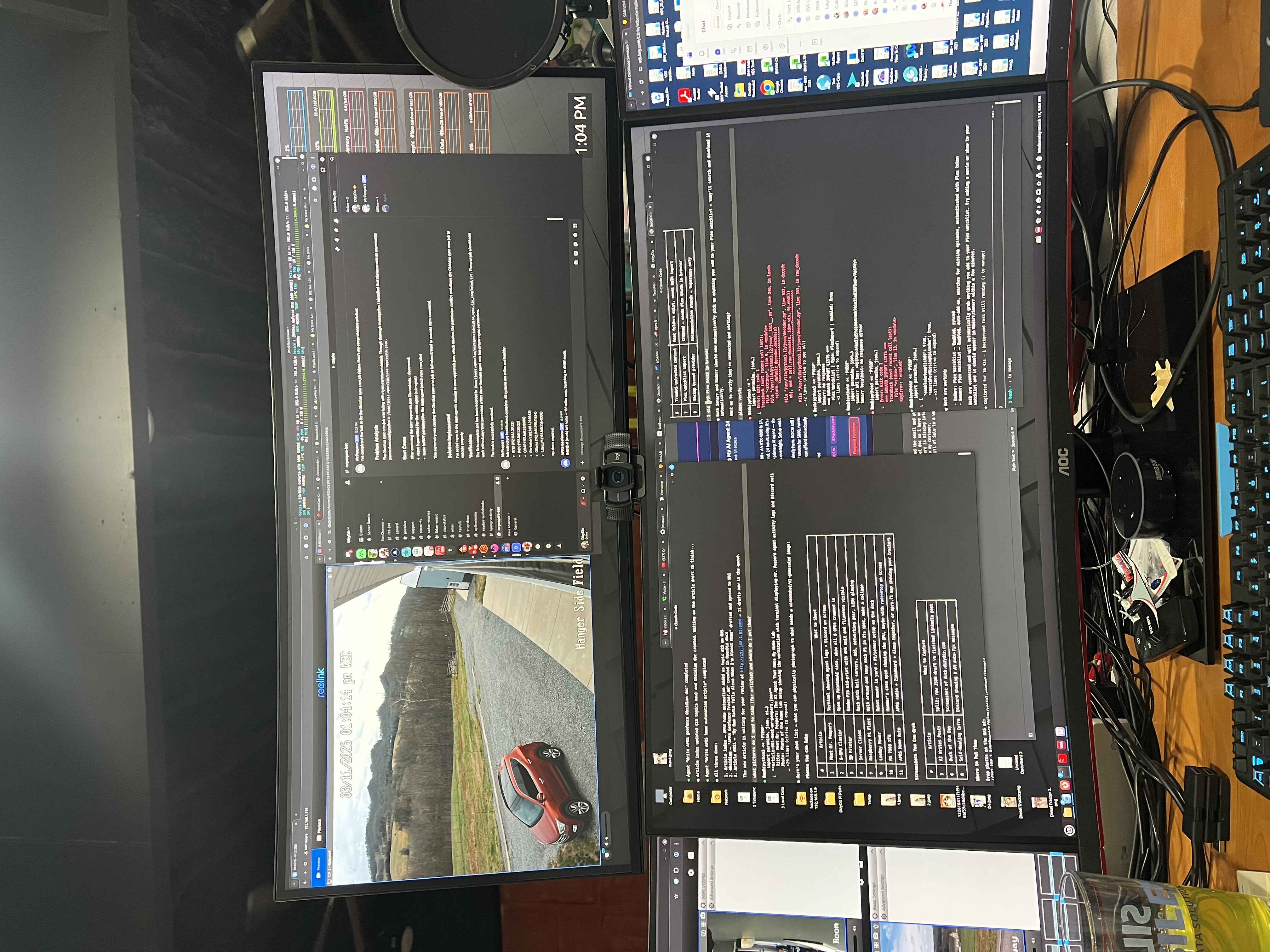

Mr. Peepers runs on qwen3-coder:30b, an open-source model purpose-built for coding and tool calling. He doesn't just answer questions -- he executes. He monitors infrastructure, manages Docker containers, updates dashboards, and posts status reports to Discord. If something breaks at 2 AM, he's the one who notices.

But here's where it gets interesting: Mr. Peepers delegates.

He spawns sub-agents to handle specialized work. Velma is his research and documentation agent, running on a separate 6-GPU cluster built from used gaming cards (more on that in a future post). Louis is the newest addition -- a business operations agent managing our automated 3D print shop. Each sub-agent gets its own model instance, its own context, and its own mission.

The architecture looks like this:

- Mr. Peepers (main agent): qwen3-coder:30b-peepers on the RX 7900 XTX. ~50 tokens/sec, sub-second tool calls.

- Velma (research/docs agent): qwen3-coder:30b on the 6-GPU cluster. ~53 tokens/sec across 42GB of combined VRAM.

- Louis (business agent): Same model as Velma, focused on print business operations.
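To make that layout concrete, here is a minimal Python sketch of the three agents as a small registry. It is an illustration, not the actual setup: the `Agent` class, the `delegate` helper, and the host labels (`rx7900xtx-host`, `gpu-cluster-host`) are hypothetical, and placing Louis on the cluster is an assumption drawn from "same model as Velma".

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    name: str
    role: str
    model: str  # Ollama model tag, as listed above
    host: str   # hypothetical host label, not a real machine name
    # Each agent keeps its own message history (its own context).
    context: list = field(default_factory=list)


AGENTS = {
    "peepers": Agent("Mr. Peepers", "main agent",
                     "qwen3-coder:30b-peepers", "rx7900xtx-host"),
    "velma": Agent("Velma", "research/docs",
                   "qwen3-coder:30b", "gpu-cluster-host"),
    # Assumption: Louis shares Velma's host as well as her model.
    "louis": Agent("Louis", "business operations",
                   "qwen3-coder:30b", "gpu-cluster-host"),
}


def delegate(agent_key: str, mission: str) -> Agent:
    """Hand a mission to a sub-agent by appending it to that agent's own context."""
    agent = AGENTS[agent_key]
    agent.context.append({"role": "user", "content": mission})
    return agent
```

Calling `delegate("velma", "Summarize the proxy docs")` touches only Velma's context; Louis and Mr. Peepers keep their own histories, which is the "own context, own mission" idea in miniature.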

A custom proxy I wrote in Node.js routes requests between the GPU hosts, handles model aliasing, filters tool definitions to prevent GPU hangs on smaller cards, and injects keep-alive parameters that Ollama's own config ignores. It's held together with temp files, curl, and a healthy disrespect for best practices.
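The real proxy is Node.js and isn't shown here, but the three rewriting steps (alias resolution, tool filtering, keep-alive injection) can be sketched in a few lines of Python. Everything specific below is an assumption for illustration: the alias table, host URLs, tool names, the allow-list approach to filtering, and the `"30m"` default. The request shape follows Ollama's `/api/chat` body, which accepts `model`, `tools`, and `keep_alive` fields.

```python
import copy

# Hypothetical alias table: name the client asks for -> (upstream host, real model tag).
ALIASES = {
    "peepers": ("http://rx7900xtx-host:11434", "qwen3-coder:30b-peepers"),
    "velma": ("http://gpu-cluster-host:11434", "qwen3-coder:30b"),
}

# Assumption: the cluster of used gaming cards is the "smaller cards" host,
# and filtering is done with a simple allow-list of tool names.
SMALL_CARD_HOSTS = {"http://gpu-cluster-host:11434"}
ALLOWED_TOOLS = {"read_file", "web_search"}


def rewrite_request(body: dict, keep_alive: str = "30m") -> tuple[str, dict]:
    """Return (upstream URL, rewritten body) for an Ollama-style chat request."""
    host, model = ALIASES[body["model"]]
    out = copy.deepcopy(body)  # never mutate the caller's request
    out["model"] = model       # swap the alias for the real model tag
    if host in SMALL_CARD_HOSTS and "tools" in out:
        # Forward only the allow-listed tool definitions to smaller cards.
        out["tools"] = [t for t in out["tools"]
                        if t["function"]["name"] in ALLOWED_TOOLS]
    out["keep_alive"] = keep_alive  # set on every request by the proxy
    return f"{host}/api/chat", out
```

A request for `"velma"` carrying a `read_file` tool and a `docker_restart` tool would be sent to the cluster host with only `read_file` attached and `keep_alive` set. Putting the keep-alive in the request body sidesteps whatever the server-side config does with it.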

Why do all this locally instead of just paying for Claude or GPT-4?

Three reasons:

- Cost. Once you own the hardware, inference is essentially free beyond electricity. I run thousands of tool calls per day with no per-call charge.

- Control. I can customize prompts, tool definitions, approval workflows, and model behavior without waiting for an API provider to ship a feature.

- Learning. There's no substitute for running the whole stack yourself if you want to truly understand how AI agents work.

Is it production-grade? Honestly, no. GPU hangs still require full reboots. The proxy has more workarounds than I'd like to admit. And sometimes Mr. Peepers decides the best response to a simple question is to spawn three sub-agents and write a report.

But it works. And watching an AI agent you built yourself manage your infrastructure from a repurposed Dell server in your home office -- that never gets old.

I'm a Co-CIO by day, a pilot and hobby farmer by evening, and apparently a junior AI platform engineer by midnight. If you're building something similar or just curious about running AI locally, I'd love to hear about it.

What's stopping you from running your own AI agent at home?